The Internet of Things (IoT) has become commonplace in almost every home and office. We use it to turn on the lights, connect our devices to satellites broadcasting streaming media, or place orders for grocery delivery. But the IoT has also quickly become an integral part of supply chains. It provides us with the advantage of using systems already in place, such as our smartphones or supply chain execution platforms, to expand our capabilities for more responsive and manageable supply networks.

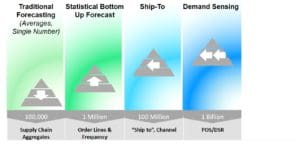

In 2016, an independent research firm [1] predicted that 8.4 billion connected things would be in use worldwide in 2017, and that the number would grow to over 20.4 billion by 2020. However, according to statista.com, the number of connected IoT devices has already reached 23.14 billion.

The most important feature of any smooth-running supply chain is visibility, meaning full visibility with the capability to control the supply chain from your vantage point. A robust control tower technology solution utilizes extensive digital data in multiple ways, allowing companies to see the full supply chain picture and equipping them with tools to act immediately upon these data points.

Supply chains are prime candidates for IoT applications because they have so many moving parts (literally). Suppliers, brokers, carriers, and drivers are among the third parties that have a hand in your fast-moving supply chain, and they need to collaborate with the manufacturer, suppliers, DCs, retailers, and customers as products are being produced, packed, and shipped across borders.

Technologies are already in place to manage disparate parts of the supply chain, but IoT applications can actually bring those disparate parts together to create an information ecosystem that benefits all its participants. IoT technologies are quite distinct from the information technology tools being used today because they provide real-time information that can feed into many areas of the supply chain.

This is easily realized in the area of in-transit shipment visibility, where black holes have existed for many years. Ocean and air carriers have the sophisticated technology to provide updates on your shipments, but these are often long-range milestones. When it comes to over-the-road or LTL shipments, there is a huge gap in data capabilities. But this is an area of focus in the consumer-driven marketplace of today.

Transportation management is one of the principal areas where connectivity and data feeds can help make informed decisions that can have a massive financial impact on a supply chain operation.

There are real cost savings and service improvement benefits from IoT data. Visibility into data points like current status, location, warehouse stage, transit times, costs, temperature, delivery information, POD documentation, and more has allowed shippers to improve shipping operations and to capture data for historical analytics that improve the future movement of goods. By integrating this data into an existing transportation management solution, timely decisions can be made and updates can be provided to customers instantly.
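To make the idea of capturing milestone data for historical analytics more concrete, here is a minimal Python sketch. It assumes a simplified event record; the `MilestoneEvent` class, its field names, and the `picked_up` and `delivered` milestone labels are hypothetical and do not come from any particular platform. It derives transit times per shipment and averages them per carrier.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean


@dataclass(frozen=True)
class MilestoneEvent:
    """Hypothetical shape of one tracking update from an IoT or carrier feed."""
    shipment_id: str
    carrier: str
    milestone: str       # assumed labels: "picked_up", "delivered"
    timestamp: datetime


def transit_hours(events):
    """Return {shipment_id: hours from pickup to delivery} for completed shipments."""
    pickups, deliveries = {}, {}
    for event in events:
        if event.milestone == "picked_up":
            pickups[event.shipment_id] = event.timestamp
        elif event.milestone == "delivered":
            deliveries[event.shipment_id] = event.timestamp
    # Shipments still in transit (no delivery event yet) are skipped.
    return {
        sid: (deliveries[sid] - pickups[sid]).total_seconds() / 3600
        for sid in pickups
        if sid in deliveries
    }


def average_transit_by_carrier(events):
    """Return {carrier: mean transit hours} across that carrier's completed shipments."""
    carrier_of = {event.shipment_id: event.carrier for event in events}
    hours_by_carrier = {}
    for sid, hours in transit_hours(events).items():
        hours_by_carrier.setdefault(carrier_of[sid], []).append(hours)
    return {carrier: mean(hours) for carrier, hours in hours_by_carrier.items()}


sample_events = [
    MilestoneEvent("S1", "Carrier A", "picked_up", datetime(2024, 1, 1, 8, 0)),
    MilestoneEvent("S1", "Carrier A", "delivered", datetime(2024, 1, 2, 8, 0)),
    MilestoneEvent("S2", "Carrier A", "picked_up", datetime(2024, 1, 1, 0, 0)),
    MilestoneEvent("S2", "Carrier A", "delivered", datetime(2024, 1, 3, 0, 0)),
    MilestoneEvent("S3", "Carrier B", "picked_up", datetime(2024, 1, 1, 9, 0)),
]
```

Calling `average_transit_by_carrier(sample_events)` summarizes the two completed shipments for Carrier A and leaves out Carrier B, whose shipment has not been delivered yet. A production system would also need to handle duplicate, late, or out-of-order events.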

Real-time information is possible with modern technology, and companies that leverage technology solutions that provide comprehensive, multimodal data in a consolidated platform will be leading the pack. Utilizing IoT and digital supply chain management platforms has moved far beyond looking at only ocean carrier milestones. With this new level of end-to-end shipment visibility, shippers can instantly access transit times from every carrier along the route to create efficiencies and increase communication within their organizations – ultimately providing the highest level of customer satisfaction. Your company’s track-and-trace capabilities have been enhanced to become a multi-mode control tower visibility platform.

Leveraging a global trade management solution that provides multi-mode in-transit visibility functionality that connects importers and exporters with their overseas suppliers, logistics providers, brokers, and carriers is the new imperative. The platform needs to include a global trading network with pre-established connectivity to thousands of trading partners, along with secure data communication services that enable supply chain partners to share information, distribute reports, and receive alerts on milestones that are critical to the timely delivery of goods. With this visibility and connectivity across the supply chain, organizations can reduce cross-border trade risks while improving supply chain responsiveness.

The network data is greatly enhanced when an API-based integration layer is used to create carrier connectivity and next-generation data standardization from third-party providers like project44 and others. This level of modern connectivity includes the use of data from IoT devices to replace outdated mechanisms like EDI and/or SMC3 rate bureaus, FTP, spreadsheets, website scraping, and manual processes (phone, email, fax).
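As a rough illustration of what data standardization in an integration layer can look like, the Python sketch below maps two differently shaped carrier payloads onto one common record. Everything in it is an assumption made for the example: the source names `carrier_a` and `carrier_b`, the payload fields, and the status codes are invented and do not reflect the API of project44, Amber Road, or any real carrier.

```python
from datetime import datetime, timezone

# Hypothetical status codes used by the first example feed.
CARRIER_A_STATUS = {"PU": "picked_up", "IT": "in_transit", "DLV": "delivered"}


def normalize_carrier_a(payload):
    """Assumed input: {"pro": ..., "status": "DLV", "ts": ISO 8601 string with offset}."""
    return {
        "shipment_id": payload["pro"],
        "status": CARRIER_A_STATUS.get(payload["status"], "unknown"),
        "timestamp": datetime.fromisoformat(payload["ts"]).astimezone(timezone.utc),
    }


def normalize_carrier_b(payload):
    """Assumed input: {"trackingNumber": ..., "event": "Delivered", "epochSeconds": int}."""
    return {
        "shipment_id": payload["trackingNumber"],
        "status": payload["event"].strip().lower().replace(" ", "_"),
        "timestamp": datetime.fromtimestamp(payload["epochSeconds"], tz=timezone.utc),
    }


# One normalizer per data source; adding a carrier means adding one entry here.
NORMALIZERS = {
    "carrier_a": normalize_carrier_a,
    "carrier_b": normalize_carrier_b,
}


def normalize(source, payload):
    """Convert a raw payload from a known source into the common record shape."""
    if source not in NORMALIZERS:
        raise ValueError(f"no normalizer registered for {source!r}")
    return NORMALIZERS[source](payload)


record_a = normalize(
    "carrier_a",
    {"pro": "12345", "status": "DLV", "ts": "2024-01-02T08:00:00+00:00"},
)
record_b = normalize(
    "carrier_b",
    {"trackingNumber": "12345", "event": "Delivered", "epochSeconds": 1704182400},
)
```

Once every feed is reduced to the same `shipment_id`, `status`, and `timestamp` fields, downstream tools such as alerts, reports, and analytics only need to understand a single format, regardless of how each carrier or device reports its data.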

With an end-to-end view, real-time data from a sophisticated network, and historical analytics, shippers can achieve greater efficiencies and cost savings. Agility – the most desirable trait in today’s supply chains – is achieved with the ability to instantly access shipment tracking details and take action to avoid delays or reduce costs. All of these activities are available directly within the Amber Road platform, which translates into seamless, instant and error-free data sharing between suppliers, carriers and customers.

Learn more about the path to a digital supply chain execution platform during Amber Road’s webinar with ARC Advisory Group’s Clint Reiser on October 24: Building a Digital Supply Chain is Like Hosting a Potluck: What You Need to Know.

---

[1] Gartner, Inc.: Forecast: Internet of Things — Endpoints and Associated Services, Worldwide, 2016